12 Dec 2024

We are thrilled to announce that MDAnalysis has been awarded a Small Development Grant by NumFOCUS to enhance scientific molecular rendering with MolecularNodes in 2025. This initiative is a collaborative effort between Yuxuan Zhuang and Brady Johnston.

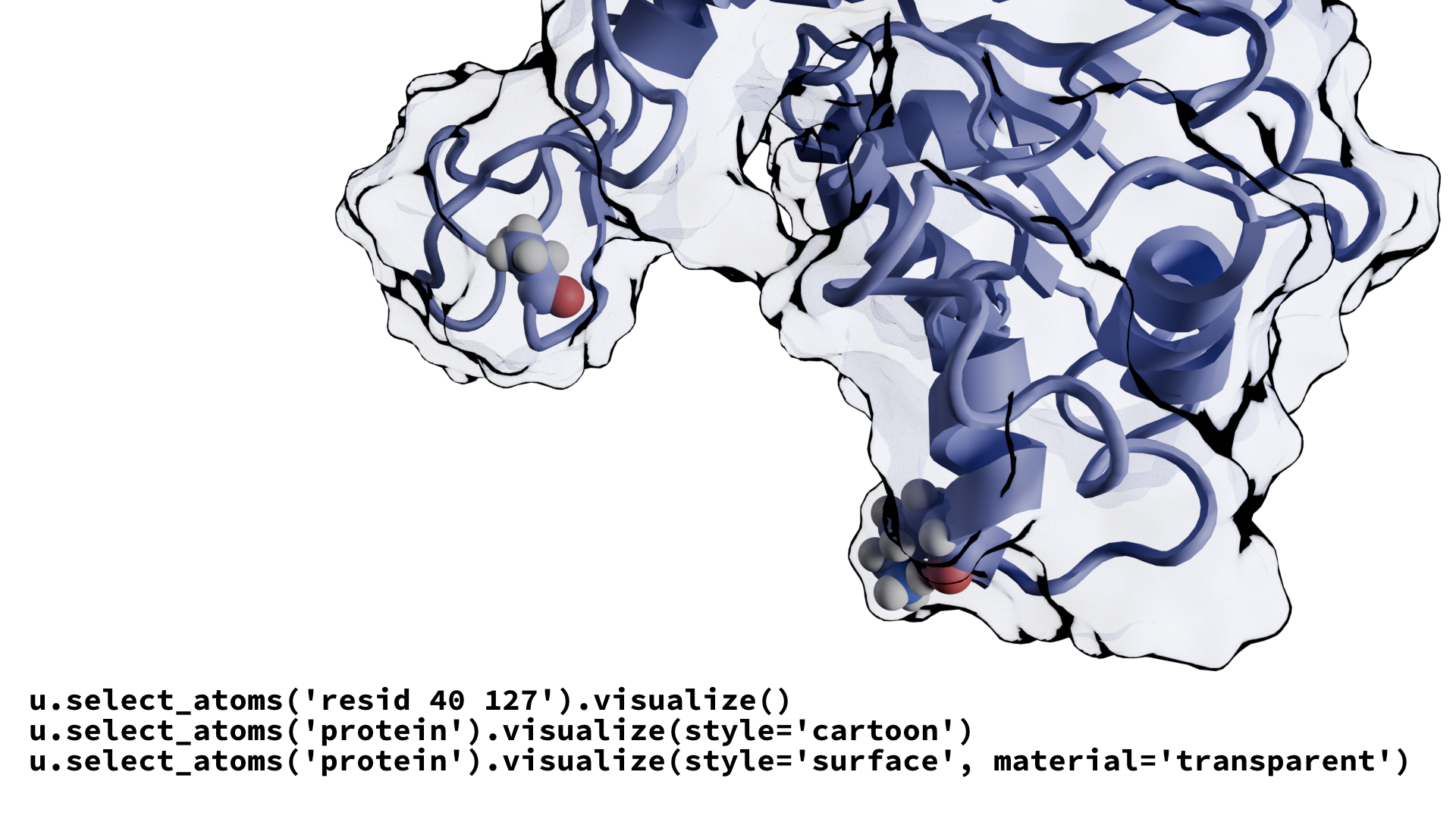

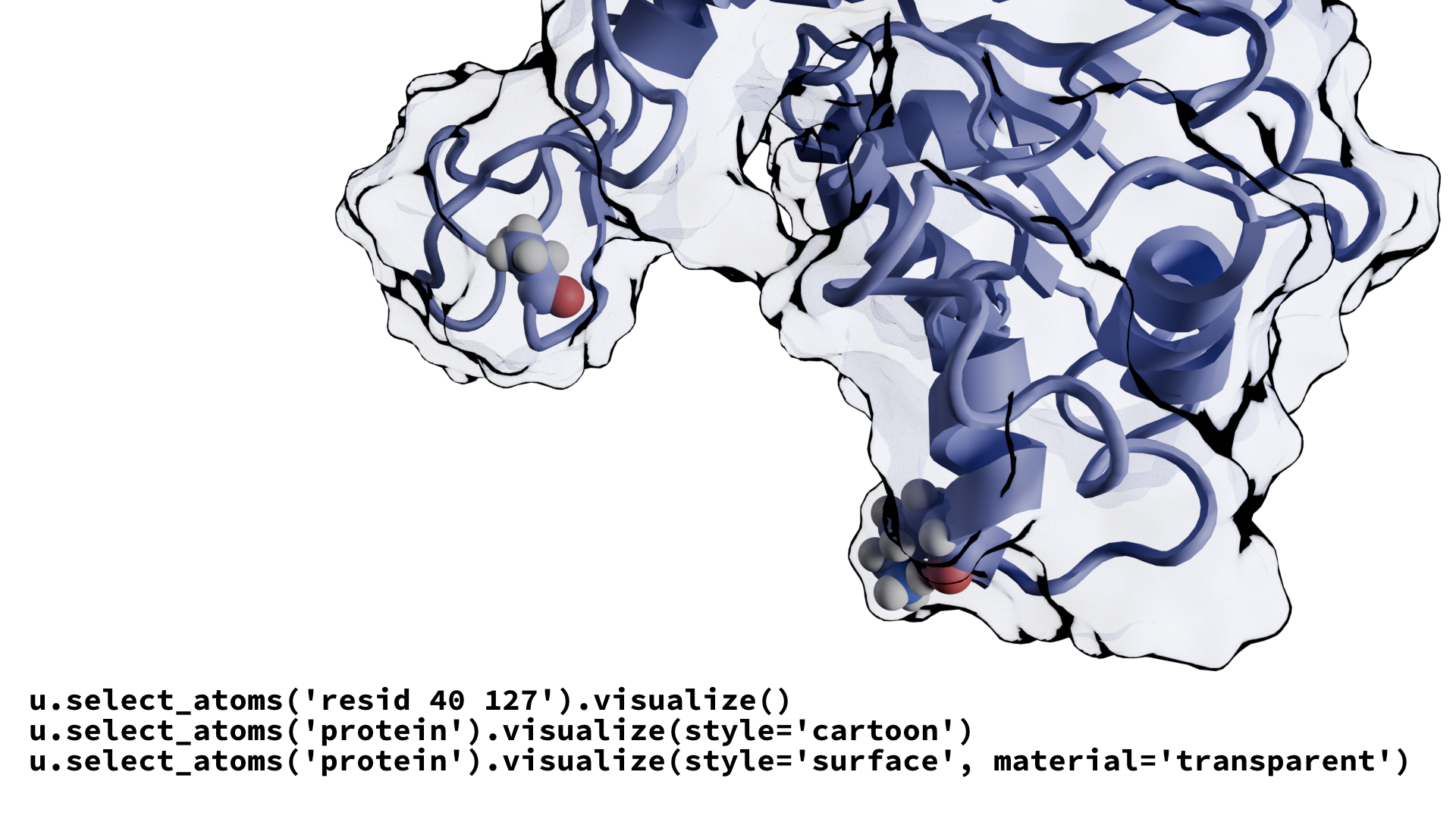

MolecularNodes facilitates the seamless import and visualization of structural biology data within Blender, leveraging Blender’s industry-leading visualization and animation tools. Molecular Nodes has garnered widespread excitement among scientists for its ability to create stunning and informative molecular visualizations. However, the current version of Molecular Nodes has an under-developed scripting interface, which limits the potential for automated molecular rendering. Our project aims to address these limitations by developing a robust API, enabling users to render molecular structures with straightforward and customizable code.

Project Overview

The development will proceed in three key stages:

- API Development: We will create a stable API for Molecular Nodes, empowering users to automate molecular rendering with minimal effort.

- Interactive Jupyter Integration: A Jupyter widget will be built to integrate with MDAnalysis, providing an interactive environment for controlling and rendering molecular objects directly within notebooks via Blender.

- Advanced Visualization Tools: We will develop tools for visualizing basic geometric features and even complex analysis results from MDAnalysis.

Be Part of the Process!

We invite you to join our Discord channel to share your ideas and feedback as we build these tools. If you’d like to be a beta user, let us know; your input will help shape the future of Molecular Nodes! Stay tuned for updates and sneak peeks of our progress.

Thank you to NumFOCUS for supporting this exciting project!

22 Nov 2024

We are happy to release version 2.8.0 of MDAnalysis!

This is a minor release of the MDAnalysis library, which means that it contains enhancements, bug fixes, deprecations, and other backwards-compatible changes.

However, in this case "minor" does not quite do justice to what is happening in this release, given that we have (at least) three big changes/additions:

- The license was changed to the GNU Lesser General Public License so that MDAnalysis can be used by packages under any license while keeping the source code itself free and protected.

- We introduce the Guesser API for guessing missing topology attributes such as element or mass in a context-dependent manner. Until release 3.0, you should not notice any differences, but under the hood we are getting ready to make it easier to work with simulations in a different context (e.g., with the MARTINI force field or experimental PDB files). With consistent attributes, such as elements, it becomes a lot easier to interface with tools like the cheminformatics toolkit RDKit (via the converters).

  The guessers are the GSoC 2022 project of @aya9aladdin with help from @lilyminium, @IAlibay, and @jbarnoud.

- We are introducing parallel analysis for tools in MDAnalysis.analysis following the simple split-apply-combine paradigm that we originally prototyped in PMDA. What’s really exciting is that any analysis code that is based on MDAnalysis.analysis.base.AnalysisBase can enable parallelization with a few lines of extra code; all the hard work is done behind the scenes in the base class (in a way that is fully backwards compatible!).

  This new feature is the work of @marinegor, who brought his GSoC 2023 project to completion, with great contributions by @p-j-smith, @yuxuanzhuang, and @RMeli.

  Not all MDAnalysis analysis classes have parallelization enabled yet, but in addition to RMSD, @talagayev has been working tirelessly and has already enabled it for GNMAnalysis, BAT, Dihedral, Ramachandran, Janin, DSSP (yes, MDAnalysis has finally got DSSP, based on pydssp, also thanks to @marinegor), and HydrogenBondAnalysis.
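To make the split-apply-combine idea concrete, here is a minimal, stdlib-only sketch. It is not MDAnalysis's implementation: the toy "trajectory" (a list of coordinate lists), the per-frame analysis (a mean coordinate), and the helpers `split`, `apply_block`, `combine`, and `run` are all hypothetical. It only shows the pattern: the frames are split into blocks, each block is analyzed independently, and the partial results are combined back in frame order.

```python
from concurrent.futures import ThreadPoolExecutor
from statistics import fmean


def split(indices, n_parts):
    """Split: cut the frame indices into n_parts contiguous, nearly equal blocks."""
    size, extra = divmod(len(indices), n_parts)
    blocks, start = [], 0
    for k in range(n_parts):
        stop = start + size + (1 if k < extra else 0)
        blocks.append(indices[start:stop])
        start = stop
    return blocks


def apply_block(frames, block):
    """Apply: run the per-frame analysis (here, a mean coordinate) on one block."""
    return [fmean(frames[i]) for i in block]


def combine(partials):
    """Combine: concatenate the per-block results back into frame order."""
    return [value for part in partials for value in part]


def run(frames, n_workers=1):
    blocks = split(list(range(len(frames))), n_workers)
    # Threads keep the sketch portable; CPU-bound work would normally use
    # separate processes or a cluster scheduler instead.
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(lambda block: apply_block(frames, block), blocks))
    return combine(partials)


# Toy "trajectory": 10 frames, each a flat list of three coordinates.
frames = [[float(f), f + 1.0, f + 2.0] for f in range(10)]
print(run(frames, n_workers=4) == run(frames, n_workers=1))  # True
```

Because each block is processed independently and `pool.map` returns results in input order, the parallel result is identical to the serial one. That property is what lets a base class hide the parallelization from individual analysis classes.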

Read on for more details on the license change and the usual information on supported environments, upgrading your version of MDAnalysis, and a summary of the most important changes.

License change to LGPL

This is the first release of MDAnalysis under the Lesser General Public License. We have been working towards this license change for the last 3 years; this release (almost) concludes the process that we described in our licensing update blog post.

- All code is now under the LGPLv2.1 license or any higher version.

- The package is under the LGPLv3 license or any higher version. However, once we have removed the dependencies that currently prevent licensing under LGPLv2.1+, we will also license the package under the same LGPLv2.1+ as the code itself.

We would like to thank all our contributors who granted us permission to change the license. We would also like to thank a number of institutions that were especially supportive of our open source efforts, namely Arizona State University, Australian National University, Johns Hopkins University, and the Open Molecular Science Foundation. We are also grateful to NumFOCUS for legal support. The relicensing team was led by @IAlibay and @orbeckst.

Supported environments

The minimum required NumPy version is 1.23.3; MDAnalysis now builds against NumPy 2.0.

Supported Python versions: 3.10, 3.11, 3.12, 3.13. Support for version 3.13 has been added in this release and support for 3.9 has been dropped (following SPEC 0).

Please note that Python 3.13 support is limited to PyPI for now; the conda-forge channel only provides packages for Python 3.10 to 3.12.

Supported Operating Systems:

Upgrading to MDAnalysis version 2.8.0

To update with mamba (or conda) from the conda-forge channel run

mamba update -c conda-forge mdanalysis

To update from PyPI with pip run

python -m pip install --upgrade MDAnalysis

For more help with installation see the installation instructions in the User Guide.

Make sure you are using a Python version compatible with MDAnalysis before upgrading (Python >= 3.10).
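A minimal pre-upgrade check, assuming only the version lists stated above (Python 3.10 to 3.13 via PyPI, 3.10 to 3.12 via conda-forge at the time of this release). The helper name `install_options` is hypothetical and not part of MDAnalysis; the version sets will go stale with future releases.

```python
import sys

# Python versions listed above as supported by MDAnalysis 2.8.0 via PyPI ...
PYPI_VERSIONS = {(3, 10), (3, 11), (3, 12), (3, 13)}
# ... and the subset available from conda-forge at the time of this release.
CONDA_FORGE_VERSIONS = {(3, 10), (3, 11), (3, 12)}


def install_options(version=None):
    """Return (pip_ok, conda_forge_ok) for a (major, minor[, ...]) version tuple.

    Defaults to the running interpreter.
    """
    major_minor = tuple((version or sys.version_info)[:2])
    return major_minor in PYPI_VERSIONS, major_minor in CONDA_FORGE_VERSIONS


pip_ok, conda_ok = install_options()
print(f"pip: {pip_ok}, conda-forge: {conda_ok}")
```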

Notable changes

For a full list of changes, bugfixes and deprecations see the CHANGELOG.

Enhancements:

- Added guess_TopologyAttrs() API to the Universe to handle attribute guessing (PR #3753)

- Added the DefaultGuesser class, which is a general-purpose guesser with the same functionalities as the existing guesser.py methods (PR #3753)

- Introduce parallelization API to AnalysisBase and to the analysis.rms.RMSD class (Issue #4158, PR #4304)

- Add analysis.DSSP module for protein secondary structure assignment, based on pydssp

- Improved performance of PDBWriter (Issue #2785, PR #4472)

- Added parsing of arbitrary columns to the LAMMPS dump parser (Issue #3504)

- Implement average structures with the iterative algorithm from DOI 10.1021/acs.jpcb.7b11988 (Issue #2039, PR #4524)

- Add support for TPR files produced by Gromacs 2024.1 (PR #4523)

Fixes:

- Fix Bohrium (Bh) atomic mass in tables.py (PR #3753)

- Catch higher dimensional indexing in GroupBase & ComponentBase (Issue #4647)

- Do not raise an Error when reading H5MD files with datasets like observables/<particle>/<property> (part of Issue #4598, PR #4615)

- Fix failure in double-serialization of TextIOPicklable file reader (Issue #3723, PR #3722)

- Fix failure to preserve modification of coordinates after serialization, e.g. with transformations (Issue #4633, PR #3722)

- Fix PSFParser error when encountering string-like resids (Issue #2053, Issue #4189, PR #4582)

- Convert OpenMM Quantity to raw value for KE and PE in OpenMMSimulationReader.

- Atomname methods can handle empty groups (Issue #2879, PR #4529)

- Fix bug in PCA preventing use of frames=... syntax (PR #4423)

- Fix analysis/diffusionmap.py to iterate over self._sliced_trajectory instead of the whole trajectory, hence supporting DistanceMatrix.run(frames=...) (PR #4433)

Changes:

- Relicense code contributions from GPLv2+ to LGPLv2.1+ and the package from GPLv3+ to LGPLv3+ (PR #4794)

- Only use distopia < 0.3.0 due to API changes (Issue #4739)

- The fetch_mmtf method has been removed as the REST API service for MMTF files has ceased to exist (Issue #4634)

- MDAnalysis now builds against numpy 2.0 rather than the minimum supported numpy version (PR #4620)

Deprecations:

- Deprecations of old guessing functionality (in favor of the new Guesser API)

  - MDAnalysis.topology.guessers is deprecated in favour of the new Guessers API and will be removed in version 3.0 (PR #4752)

  - The guess_bonds, vdwradii, fudge_factor, and lower_bound kwargs are deprecated for bond guessing during Universe creation. Instead, pass ("bonds", "angles", "dihedrals") into to_guess or force_guess during Universe creation, and the associated vdwradii, fudge_factor, and lower_bound kwargs into Guesser creation. Alternatively, if vdwradii, fudge_factor, and lower_bound are passed into Universe.guess_TopologyAttrs, they will override the previous values of those kwargs. (Issue #4756, PR #4757)

  - MDAnalysis.topology.tables is deprecated in favour of MDAnalysis.guesser.tables and will be removed in version 3.0 (PR #4752)

  - Element guessing in the ITPParser is deprecated and will be removed in version 3.0 (Issue #4698)

  - Unknown masses are still set to 0.0 in the current version; this will change in version 3.0.0, where they will be replaced by the Masses "no_value_label" attribute (np.nan) (PR #3753)

- A number of analysis modules have been moved into their own MDAKits, following the 3.0 roadmap towards a trimmed-down core library. Until release 3.0, these modules are still available through MDAnalysis.analysis (either as an import of the MDAKit, which is installed automatically as a dependency of the MDAnalysis package, or as the original code), but from 3.0 onwards, users must install the MDAKit explicitly and import it themselves.

  - The MDAnalysis.analysis.encore module has been deprecated in favour of the mdaencore MDAKit and will be removed in version 3.0.0 (PR #4737)

  - The MDAnalysis.analysis.waterdynamics module has been deprecated in favour of the waterdynamics MDAKit and will be removed in version 3.0.0 (PR #4404)

  - The MDAnalysis.analysis.psa module has been deprecated in favour of the PathSimAnalysis MDAKit and will be removed in version 3.0.0 (PR #4403)

- The MMTF Reader is deprecated and will be removed in version 3.0 as the MMTF format is no longer supported (Issue #4634).
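The bond-guessing keywords named in the deprecations above (vdwradii, fudge_factor, lower_bound) control a simple distance criterion. The sketch below shows that criterion as we understand it from the MDAnalysis documentation: atoms i and j are treated as bonded when lower_bound < d_ij < fudge_factor · (r_i + r_j), where r are van der Waals radii. Everything here is a hypothetical illustration, not MDAnalysis code: the function `guess_bonds_sketch`, the small radii table (assumed values), and the lookup by element (we believe MDAnalysis looks radii up by atom type). The defaults 0.55 and 0.1 are assumed to mirror MDAnalysis's own defaults. The real implementation also handles periodic boxes and uses a fast neighbor search instead of this O(N²) loop.

```python
import math
from itertools import combinations

# Illustrative van der Waals radii in angstrom (assumed values for this
# sketch; MDAnalysis ships its own radii table).
VDW_RADII = {"H": 1.1, "C": 1.7, "N": 1.55, "O": 1.52}


def guess_bonds_sketch(elements, positions, vdwradii=None,
                       fudge_factor=0.55, lower_bound=0.1):
    """Hypothetical helper: return index pairs (i, j) considered bonded.

    A pair is bonded when lower_bound < d_ij < fudge_factor * (r_i + r_j).
    User-supplied vdwradii override the default table. No periodic boundary
    conditions and an O(N^2) pair loop, so only suitable for tiny systems.
    """
    radii = {**VDW_RADII, **(vdwradii or {})}
    bonds = []
    for i, j in combinations(range(len(elements)), 2):
        d = math.dist(positions[i], positions[j])
        cutoff = fudge_factor * (radii[elements[i]] + radii[elements[j]])
        if lower_bound < d < cutoff:
            bonds.append((i, j))
    return bonds


# Formaldehyde-like geometry (C, O, H, H), coordinates in angstrom.
elements = ["C", "O", "H", "H"]
positions = [(0.0, 0.0, 0.0), (1.21, 0.0, 0.0), (-0.55, 0.94, 0.0), (-0.55, -0.94, 0.0)]
print(guess_bonds_sketch(elements, positions))  # [(0, 1), (0, 2), (0, 3)]
```

Lowering fudge_factor tightens the cutoff and drops bonds, while overriding a radius through vdwradii changes the cutoff only for pairs involving that element. This is why the release moves these settings onto the Guesser, where they belong to one guessing context.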

Author statistics

This release was the work of 22 contributors, 10 of whom are new contributors.

Our new contributors are:

Acknowledgements

MDAnalysis thanks NumFOCUS for its continued support as our fiscal sponsor and

the Chan Zuckerberg Initiative for supporting MDAnalysis under EOSS4 and EOSS5 awards.

— @IAlibay (release manager) on behalf of the MDAnalysis Team

03 Nov 2024

Have you ever wanted to analyze sub-picosecond dynamics in your trajectories? Are your trajectory files too large? Want to sync up your analysis and trajectory production? Lucky for you, MDAnalysis, in conjunction with Arizona State University (ASU) and with the support of a CSSI Elements grant from the National Science Foundation, is holding a free, online developer workshop focused on streaming and inline analysis of molecular simulations on December 4th, 2024.

The general idea of streaming, just like with Netflix, is to transfer data piece-by-piece as needed instead of transferring entire files. In our case, the data generated during a running simulation is transmitted to MDAnalysis for processing without ever being stored on disk.

Our streaming interface is built on top of the TCP/IP socket protocol and can transmit data between distinct processes: A) on the same computer; B) on different computers in a local network; C) via the internet.

This makes it possible to analyze MD simulation trajectories live while they are being generated. In particular, the streaming interface allows analysis of data at femtosecond-scale time intervals, which would create massive trajectories and slow down the simulation engine if written to disk.
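The streaming idea can be sketched with nothing but the standard library. This is a minimal illustration under loudly stated assumptions: the wire format below (an atom count followed by float32 coordinates) is invented for this sketch and is NOT the IMD protocol used by the actual MDAnalysis streaming interface and the IMDClient package named in the agenda below. A connected `socket.socketpair()` stands in for a TCP connection between the simulation process and the analysis process, and a thread plays the role of the simulation engine. The consumer performs an inline analysis (a running mean) without ever storing past frames.

```python
import socket
import struct
import threading

# Hypothetical wire format for this sketch only (it is NOT the IMD protocol):
# each frame is a 4-byte big-endian atom count followed by 3 * n_atoms
# float32 coordinates; an atom count of 0 marks the end of the stream.
HEADER = struct.Struct("!I")


def send_frames(sock, frames):
    """Producer: stands in for a running simulation engine."""
    for coords in frames:
        flat = [c for xyz in coords for c in xyz]
        sock.sendall(HEADER.pack(len(coords)) + struct.pack(f"!{len(flat)}f", *flat))
    sock.sendall(HEADER.pack(0))  # end-of-stream marker
    sock.close()


def recv_exact(sock, n):
    """Read exactly n bytes: a stream socket delivers bytes, not messages."""
    buf = bytearray()
    while len(buf) < n:
        chunk = sock.recv(n - len(buf))
        if not chunk:
            raise ConnectionError("stream closed before a full frame arrived")
        buf.extend(chunk)
    return bytes(buf)


def analyze_stream(sock):
    """Consumer: inline analysis that never keeps past frames in memory or on disk.

    Returns the number of frames seen and the running mean of atom 0's x coordinate.
    """
    n_frames, mean_x = 0, 0.0
    while True:
        (n_atoms,) = HEADER.unpack(recv_exact(sock, HEADER.size))
        if n_atoms == 0:
            break
        coords = struct.unpack(f"!{3 * n_atoms}f", recv_exact(sock, 12 * n_atoms))
        n_frames += 1
        mean_x += (coords[0] - mean_x) / n_frames  # incremental (online) mean
    return n_frames, mean_x


# Usage: a connected socket pair stands in for a TCP connection between
# the simulation process and the analysis process.
frames = [[(float(f), 0.0, 0.0), (0.0, 1.0, 0.0)] for f in range(5)]
producer, consumer = socket.socketpair()
sender = threading.Thread(target=send_frames, args=(producer, frames))
sender.start()
result = analyze_stream(consumer)
sender.join()
consumer.close()
print(result)  # (5, 2.0)
```

The key design point is that the analysis keeps only a constant-size running state (a frame count and a mean), so the memory cost does not grow with the number of frames. That is what makes femtosecond-resolution analysis feasible without writing a massive trajectory to disk.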

This online workshop is intended to introduce participants to streaming of trajectories directly from simulation engines, inline analysis of simulations, and all the awesome science you can do with streaming. This workshop is suitable for students, developers, and researchers in the broad area of computational (bio)chemistry, materials science, and chemical engineering. It is designed for those who have some familiarity with MDAnalysis and are comfortable working with Python, Jupyter Notebooks, and a molecular simulation engine such as LAMMPS, GROMACS, or NAMD.

Workshop Overview

The program will run from 8:00 am to 12:00 pm Pacific time on Wednesday, December 4th.

In the workshop, we will focus on contextualizing MD streaming, showing you use cases ranging from streaming as basic connective tissue to advanced, high-time-resolution analyses, and getting your hands dirty with streaming in a live-coding activity in an easy-to-use workshop environment.

| Topic | Duration |
|---|---|
| 👋 Welcome | 5 min |
| 📦 MDAnalysis mission & ecosystem | 15 min |
| 🖼️ Streaming: big picture | 15 min |
| 👀 Streaming: first look | 10 min |
| ❓ Q&A: Streaming overview | 5 min |
| 📦 Streaming: MD packages, IMDClient | 15 min |
| 👀 Demo: Multiple analyses on NAMD simulation stream | 10 min |
| 💤 Break | 10 min |
| 🎯 Activity: Write your own stream analysis | 40 min |
| 📦 Streaming: MDAnalysis functionality | 10 min |
| ❓ Q&A: Streaming with MDAnalysis | 5 min |
| 👀 Application: Velocity correlation functions and 2PT | 10 min |
| 👀 Application: Ion channel permeation | 10 min |
| ❓ Q&A: Applications | 5 min |
| 🔮 Future direction | 5 min |
| 📖 Open Forum | 20 min |
| 🚪 Closing | 5 min |

Registration

Attendance at this workshop will be free, and we encourage anyone with an interest in attending to register below.

Register

Workshop materials

All materials are made available in the github.com/MDAnalysis/imd-workshop-2024 repository.

Prepare for the interactive workshop activities by following the set-up instructions.

If you have any questions or special requests related to this workshop, you may contact the organizing committee.